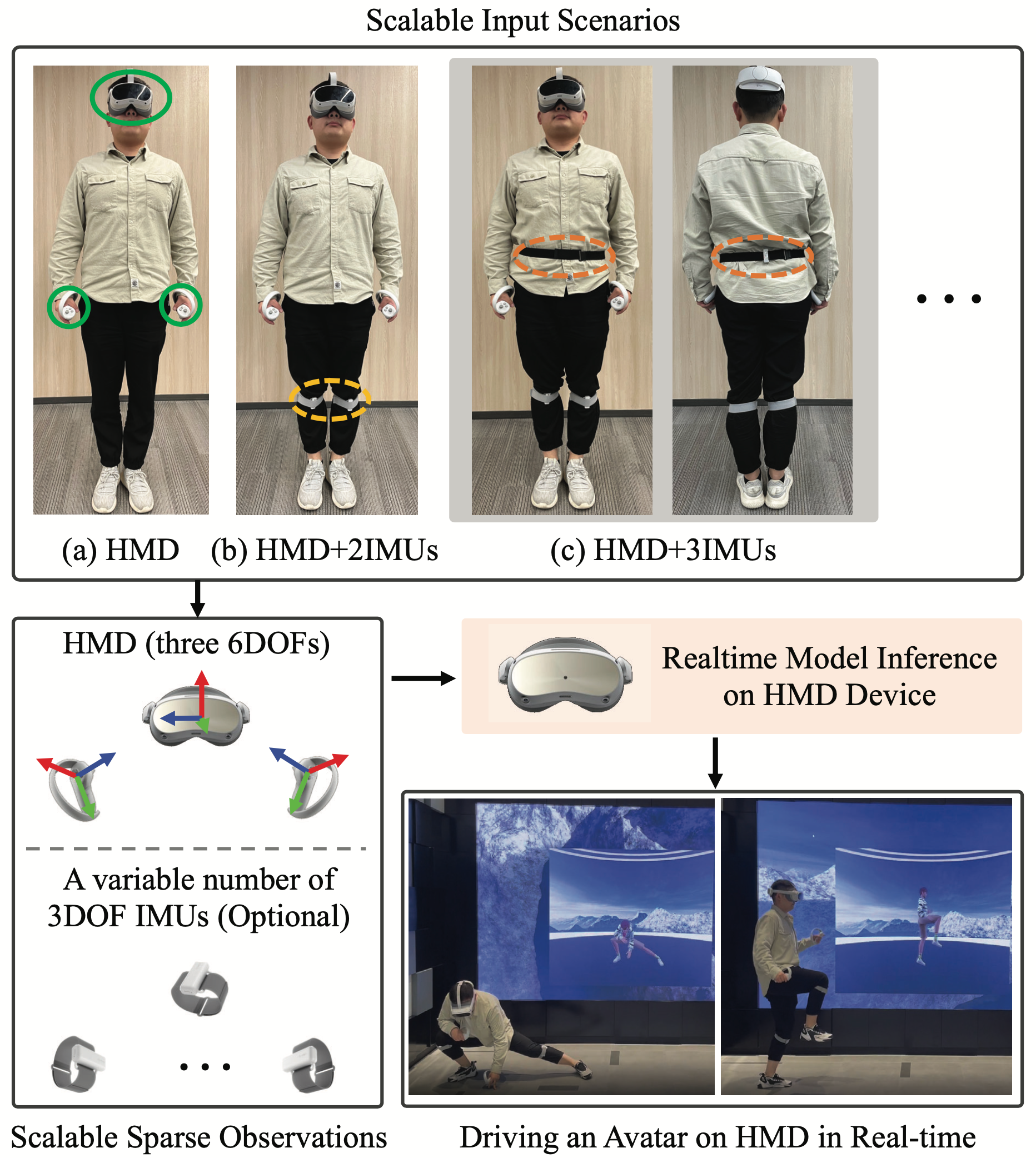

It is especially challenging to achieve real-time human motion tracking on a standalone VR Head-Mounted Display (HMD) such as Meta Quest and PICO. In this paper, we propose HMD-Poser, the first unified approach to recover full-body motions using scalable sparse observations from HMD and body-worn IMUs. In particular, it can support a variety of input scenarios, such as HMD, HMD+2IMUs, HMD+3IMUs, etc. The scalability of inputs lets users choose between higher tracking accuracy and greater ease of wear. A lightweight temporal-spatial feature learning network is proposed in HMD-Poser to guarantee that the model runs in real-time on HMDs. Furthermore, HMD-Poser presents online body shape estimation to improve the position accuracy of body joints. Extensive experimental results on the challenging AMASS dataset show that HMD-Poser achieves new state-of-the-art results in both accuracy and real-time performance. We also build a new free-dancing motion dataset to evaluate HMD-Poser's on-device performance and investigate the performance gap between synthetic data and real-captured sensor data. Finally, we demonstrate our HMD-Poser with a real-time Avatar-driving application on a commercial HMD. Our code and dataset are available here.

Live Demo: HMD+3IMUs

Live Demo: HMD+2IMUs

Live Demo: HMD-Only

Visualization Of HMD-Dancing Dataset GT

@inproceedings{daip2024hmdposer,
  title={HMD-Poser: On-Device Real-time Human Motion Tracking from Scalable Sparse Observations},
  author={Dai, Peng and Zhang, Yang and Liu, Tao and Fan, Zhen and Du, Tianyuan and Su, Zhuo and Zheng, Xiaozheng and Li, Zeming},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2024}
}